Schedule (Tentative)

| Time | Session |
|---|---|

| 08:00 – 08:05 | Opening Remarks – Welcome & Workshop Overview |

| 08:05 – 08:20 |

Opening Keynote

Dr. Walter Zimmer, University of California, Los Angeles (UCLA), USA & Technical University of Munich (TUM), Germany

Abstract: Autonomous driving in urban environments is fundamentally limited by the range, occlusions, and failure modes of vehicle-only perception. This opening keynote presents research advances in cooperative roadside–vehicle perception, which fuses multi-modal data from onboard sensors and intelligent roadside infrastructure via V2X communication to extend situational awareness beyond the line of sight. The proposed methods improve real-time 3D object detection and tracking in dense traffic and are supported by new large-scale, multi-modal datasets for benchmarking cooperative perception in real-world urban settings. By integrating recent advances in foundation models, including vision-language models, the work further enables semantic understanding of complex traffic scenes, laying the groundwork for AI-driven urban digital twins and safer, more efficient intelligent transportation systems.

Speaker Bio: Dr. rer. nat. Walter Zimmer is a postdoctoral researcher at the University of California, Los Angeles (UCLA) and a guest researcher at the Technical University of Munich (TUM). He received his Ph.D. from TUM in 2025. His research focuses on cooperative autonomous driving, 3D perception, and 3D foundation models. He has authored over 40 publications at top venues such as CVPR, ICCV, ECCV, ICML, NeurIPS, and T-PAMI. Dr. Zimmer previously worked as an Autonomous Systems Engineer at the startup STTech and as a research assistant at Siemens AG. His work has earned multiple awards, including the IEEE ITSS Best Student Paper Award 2023 and the IEEE ITSS Best Dissertation Award 2025. |

| 08:20 – 08:40 |

Keynote 1

Dr. Balajee Kannan, Motional, USA

Abstract: To be announced closer to the workshop date.

Speaker Bio: To be announced closer to the workshop date. |

| 08:40 – 09:00 |

Keynote 2

Keynote

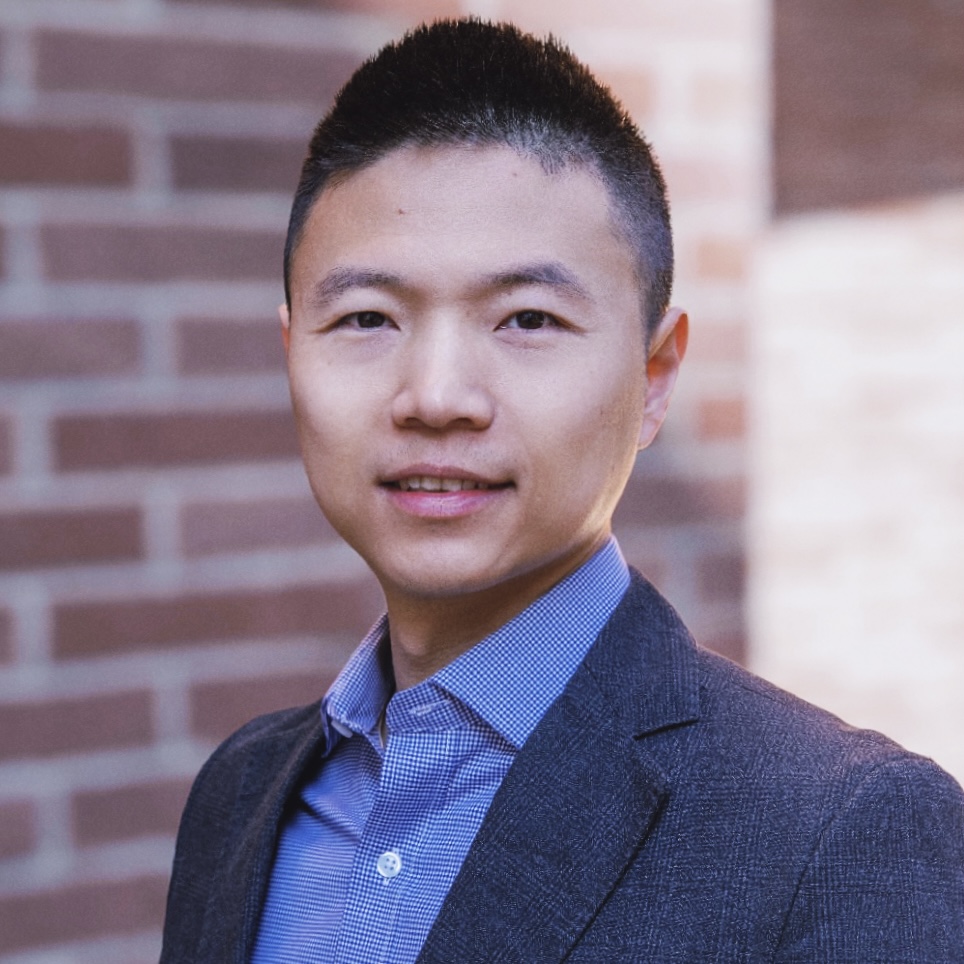

Prof. Dr. Jiaqi Ma University of California Los Angeles (UCLA), USA

AbstractAbstract will be announced closer to the workshop date. Speaker BioDr. Jiaqi Ma is Director of the FHWA/UCLA Center of Excellence on New Mobility and Automated Vehicles, Professor at the UCLA Samueli School of Engineering, Director of the UCLA Mobility Lab, and Associate Director of the UCLA Institute of Transportation Studies. He has led and managed numerous transportation research projects funded by the U.S. Department of Transportation, National Science Foundation, state Departments of Transportation, and other federal, state, and local agencies. His research spans automated driving, mobility digital twins, multimodal sensing, cooperative perception and decision-making, robotics, spatial data mining, simulation, and reasoning. |

| 09:00 – 09:20 |

Keynote 3

Dr. Mingxing Tan, Waymo, USA

Abstract: To be announced closer to the workshop date.

Speaker Bio: To be announced closer to the workshop date. |

| 09:20 – 09:40 |

Keynote 5

Dr. Jamie Shotton, Wayve, Vancouver

Abstract: To be announced closer to the workshop date.

Speaker Bio: To be announced closer to the workshop date. |

| 09:40 – 10:00 |

Keynote 4

Mustafa Bal, Nomadic AI, USA

Abstract: To be announced closer to the workshop date.

Speaker Bio: To be announced closer to the workshop date. |

| 10:00 – 10:50 | Poster Session I (ExHall A) & Coffee Break |

| 10:00 – 10:30 | Nomadic AI: Live Demo |

| 10:50 – 11:10 |

Keynote 6

Prof. Dr. Marco Pavone, Stanford University & NVIDIA, USA

Abstract: To be announced closer to the workshop date.

Speaker Bio: To be announced closer to the workshop date. |

| 11:10 – 11:30 |

Keynote 7

Dr. Phil Duan, Tesla, USA

Abstract: To be announced closer to the workshop date.

Speaker Bio: To be announced closer to the workshop date. |

| 11:30 – 12:00 | Panel Discussion I: Industry Track |

| 12:00 – 13:00 | Lunch Break & Networking |

| 13:00 – 13:20 |

Keynote 8

Challenge

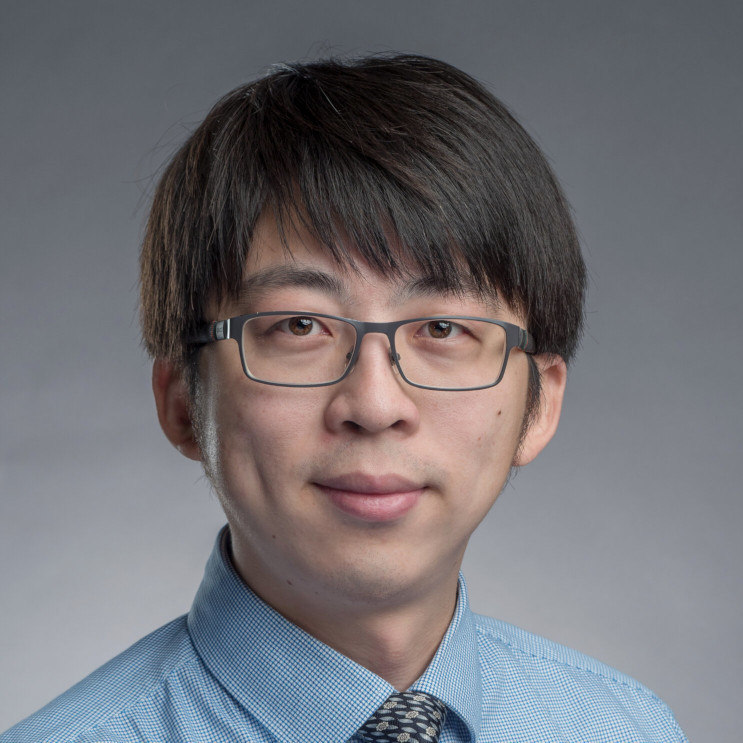

Dr. Xuewei (Tony) Qi Motional AD LLC, USA

AbstractAbstract will be announced closer to the workshop date. Speaker BioSpeaker bio will be announced closer to the workshop date. |

| 13:20 – 13:40 |

Keynote 9

Prof. Dr. Manmohan Chandraker, UCSD & NEC Labs, USA

Abstract: To be announced closer to the workshop date.

Speaker Bio: To be announced closer to the workshop date. |

| 13:40 – 14:00 |

Keynote 10

Keynote

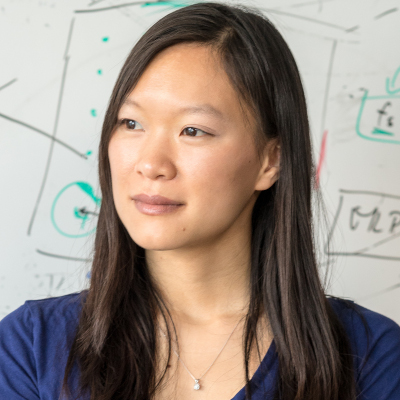

Prof. Dr. Sharon Li University of Wisconsin-Madison, USA

AbstractAbstract will be announced closer to the workshop date. Speaker BioSpeaker bio will be announced closer to the workshop date. |

| 14:00 – 14:20 |

Keynote 11

Prof. Dr. Holger Caesar, Delft University of Technology, Netherlands

Abstract: To be announced closer to the workshop date.

Speaker Bio: To be announced closer to the workshop date. |

| 14:20 – 14:40 |

Keynote 12

Prof. Dr. Daniel Cremers, Technical University of Munich, Germany

Abstract: To be announced closer to the workshop date.

Speaker Bio: To be announced closer to the workshop date. |

| 14:40 – 15:00 |

Keynote 13

Prof. Dr. Angela Dai, Technical University of Munich, Germany

Abstract: To be announced closer to the workshop date.

Speaker Bio: To be announced closer to the workshop date. |

| 15:00 – 16:00 | Poster Session II & Coffee Break |

| 16:00 – 16:30 | Panel Discussion II: Academic Track |

| 16:30 – 16:40 |

Oral Paper Presentation 1: Calibration-Free View-Agnostic Monocular 3D Object Detection for Urban Scenes

Dr. Mehmet Kerem Turkcan, Columbia University, USA

Abstract: Cooperative vehicle-to-everything (V2X) perception requires 3D object detection across heterogeneous cameras whose intrinsic parameters may be unavailable, imprecise, or drifting. We present UrbanOmniDetect, a calibration-free monocular 3D object detection framework that predicts ordered 2D projections of 3D bounding box vertices from a single RGB image. By formulating 3D detection as keypoint regression within a backbone-agnostic single-stage architecture, we obtain a single model that generalizes across ego-vehicle, infrastructure, and aerial viewpoints without camera intrinsics or scene priors. We construct the UrbanOmniView dataset by unifying KITTI, DAIR-V2X, and high-fidelity Unreal Engine 5 synthetic data (4K, ray-traced) spanning ground-level, traffic-surveillance, and drone perspectives. A homography-based bird's-eye-view (BEV) head maps predicted ground-contact keypoints to a top-down plane, enforcing geometric consistency without camera parameters. We experiment with YOLO11 backbone variants at multiple scales and with augmented feature pyramid levels. On the KITTI benchmark, our best model achieves AP_3D = 30.71 (Moderate) and AP_BEV = 35.19 at IoU ≥ 0.7, outperforming calibration-dependent baselines on the Moderate and Hard splits, with an mAP_50:95 of 0.751 and 10 ms inference on an A100 GPU. Calibration-dependent baselines degrade catastrophically under small intrinsic perturbations, whereas our formulation is invariant to them by construction. UrbanOmniDetect provides a deployment-ready framework for autonomous driving, drone surveillance, and V2X cooperative perception.

Speaker Bio: To be announced closer to the workshop date. |

| 16:40 – 16:50 |

Oral Paper Presentation 2: 4-D Radar Meets LiDAR and Camera: Cooperative Perception under Adverse Weather

Melih Yazgan, Columbia University, USA

Abstract: Cooperative perception is critical for autonomous driving but remains highly fragile when cameras and LiDAR degrade in adverse weather. We address this limitation by elevating 4D imaging radar to a first-class modality within collaborative frameworks and introducing the first Doppler-guided spatial attention mechanism for multi-agent fusion. Our approach extends two representative backbones: (i) radar replaces LiDAR to form a radar-camera pipeline, and (ii) radar complements LiDAR to form a LiDAR-radar pipeline. A Doppler-derived mask dynamically emphasizes moving objects while preserving static context, significantly enhancing robustness in cluttered and low-visibility scenes. To support comprehensive evaluation, we release radar-augmented benchmarks (OPV2V-R and Adver-City-R) featuring physics-based LiDAR degradation. Experiments demonstrate that replacing LiDAR with radar nearly doubles baseline detection accuracy in fog, while our Doppler-guided attention provides the refinement needed to achieve high precision. Furthermore, our LiDAR-radar fusion equipped with this attention mechanism achieves state-of-the-art robustness under heavy rain and fog. Additional validation on the real-world TruckScenes dataset confirms that our Doppler-guided radar modules transfer effectively beyond simulation, establishing 4D radar as a primary modality for all-weather collaborative perception.

Speaker Bio: To be announced closer to the workshop date. |

| 16:50 – 17:00 |

Oral Paper Presentation 3: Rethinking Intermediate Module Utilization in V2X End-to-End Autonomous Driving

Yiming Kan, Technical University of Munich (TUM), Germany

Abstract: End-to-end autonomous driving has progressed rapidly, with vehicle-side models relying on perception outputs or ego status. UniV2X extended this paradigm to the vehicle-to-everything (V2X) domain, where the broader perceptual scope of V2X offers a more revealing context for revisiting how effectively intermediate modules are utilized. Prior work has examined the utility of intermediate modules in vehicle-side models, with studies suggesting that historical trajectories or current ego status alone may suffice for competitive performance on open-loop datasets. Our paper revisits this assumption in the V2X setting. Using the UniV2X model as the baseline and the V2X-Seq dataset as the testbed, we examine the contribution of intermediate modules to the final planning output and explore the extent to which their utility is fully realized. Our study reveals that current end-to-end models tend to underutilize the guidance that intermediate modules provide to the planning stage, reflecting a lack of planning-oriented design. To address this issue, we propose Optimized Multi-Experts Guided Autonomous Driving (OMEGA), a functional integration mechanism that explicitly strengthens the contribution of intermediate modules to the planning process. Experimental results demonstrate that our approach significantly enhances the functional contribution of each intermediate component. Our findings suggest that performance limitations stem not from a lack of new modules but from the underutilization of existing ones, calling for a reconsideration of current end-to-end design practices.

Speaker Bio: To be announced closer to the workshop date. |

| 17:00 – 17:10 |

Oral Paper Presentation 4: DinoRADE: Full Spectral Radar-Camera Fusion with Vision Foundation Model Features for Multi-class Object Detection in Adverse Weather

Christof Leitgeb, Graz University of Technology, Austria

Abstract: Reliable and weather-robust perception systems are essential for safe autonomous driving and typically employ multi-modal sensor configurations to achieve comprehensive environmental awareness. While recent automotive FMCW radar-based approaches have achieved remarkable performance on detection tasks in adverse weather conditions, they are limited in resolving fine-grained spatial details, which are particularly critical for detecting small vulnerable road users (VRUs). Furthermore, existing research has not adequately addressed VRU detection on adverse-weather datasets such as K-Radar. We present DinoRADE, a radar-centered detection pipeline that processes dense radar tensors and aggregates vision features around transformed reference points in the camera perspective via deformable cross-attention. Vision features are provided by the DINOv3 vision foundation model. We present a comprehensive performance evaluation on the K-Radar dataset in all weather conditions and are among the first to report detection performance individually for five object classes. Additionally, we compare our method with existing single-class detection approaches and outperform recent radar-camera approaches by 12.1%. Code and trained models will be made publicly available.

Speaker Bio: To be announced closer to the workshop date. |

| 17:10 – 17:20 |

Oral Paper Presentation 5: GaussianDet3D: Bridging Gaussian Splatting and Sparse LiDAR Detection for Multi-View 3D Object Detection

Malaz Tamim, Technical University of Munich (TUM), Germany

Speaker Bio: To be announced closer to the workshop date. |

| 17:20 – 17:30 | Paper Awards Ceremony – Best Paper, Runner-Up, Best Application Paper, Best Poster & Best Keynote |

| 17:30 – 17:40 | Challenge Winner Presentation |

| 17:40 – 17:50 | Challenge Awards Ceremony |

| 17:50 – 18:00 | Closing Remarks & Group Photo |

| 19:00 – 21:00 | Workshop Reception & Networking |

Final schedule, room allocation, and speaker order will be announced closer to the workshop date.